Companies that research, produce, store, transport or sell pharmaceutical products are subject to regulatory requirements. The most common known practices are:

The laws and regulations are specific to each country and are typically enforced by a national or supranational authority such as the FDA (Food and Drug Administration) in the United States, the EU (European Union) in Europe, or Swissmedic in Switzerland. Their requirements are harmonized through the ICH (International Council for Harmonisation of Technical Requirements for Pharmaceuticals for Human Use).

In addition to official regulations, a number of important associations issue guidance documents that support and detail the regulations and their application in specific situations. Some of the most influential organizations relevant to the pharmaceutical supply chain industry are the ISPE (International Society for Pharmaceutical Engineering), the USP (United States Pharmacopeia), the PDA (Parenteral Drug Association) and the WHO (World Health Organization). The regulatory framework is therefore a living organism that changes almost daily, with new laws becoming effective and new guidance documents being published.

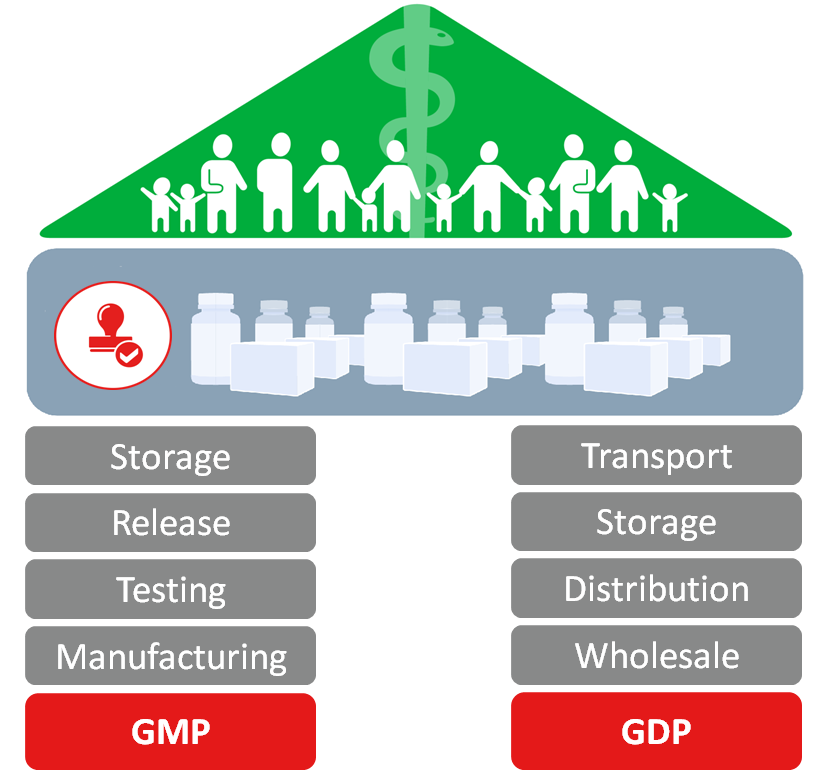

From a drug supply chain perspective, the cornerstones of the regulatory framework are the Good Manufacturing Practice system (GMP) and the Good Distribution Practice system (GDP). These are often referred to jointly as GxP. While GMP focuses on activities around manufacturing (including testing, release and storage), GDP focuses on distribution, including transportation, storage and wholesale of pharmaceutical products. Both GMP and GDP aim to protect public health by ensuring product quality.

In a nutshell

If you store or transport pharmaceutical products, you have to comply with GMP and GDP guidelines. Thus, you must ensure that:

- products are produced, handled, stored and transported in qualified facilities.

- temperatures are monitored by a compliant monitoring system (audit trail).

- the sensors are calibrated regularly.

If a company transports pharmaceutical products and wants to comply with GDP guidelines, it must store and transport the products in qualified facilities, transport containers and networks. The temperature sensors must be calibrated, and the product release must take place in a qualified and compliant system. What does compliance in combination with a temperature monitoring solution mean? In this chapter, we will explain compliance from a Cold Chain perspective.

“Title 21 CFR Part 11” is a part of Title 21 of the Code of Federal Regulations, issued by the United States Food and Drug Administration (FDA). Part 11 contains the regulations on electronic records and electronic signatures. It defines the criteria by which electronic records and electronic signatures are considered trustworthy, reliable, and equivalent to paper records to ensure GxP compliance.

A monitoring solution that stores electronic records critical to patient safety must comply with Title 21 CFR Part 11. To achieve this, it is important to understand the main risks.

Electronic data could be deleted, accidentally modified or intentionally modified. Title 21 CFR Part 11 defines criteria by which electronic data is trustworthy, reliable and equivalent to paper records and handwritten signatures executed on paper. If you follow those rules your electronic records will be complete, intact, maintained in the original context, and geared towards compliance. In the context of a Cold Chain monitoring solution this means the following:

Complete Data – When temperature is monitored with sensors, a communication bridge and a software solution, one of the main challenges is the completeness of data. Mechanisms need to be in place to ensure that no data is lost on the way from the wireless sensors through the communication bridge to the monitoring software. Therefore, if the connection between the sensors and the radio bridge or the cloud storage is lost, data must be buffered in the sensors until the connection has been re-established and the cloud confirms that the data has arrived.
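The buffering rule can be illustrated with a minimal store-and-forward sketch. It is not taken from any particular product: the `Reading` and `SensorBuffer` names and the `send` callable (which is assumed to return `True` only once the receiver has confirmed storage) are hypothetical.

```python
from collections import deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Reading:
    seq: int              # per-sensor sequence number, lets the receiver spot gaps
    timestamp: str        # ISO 8601 time of measurement
    temperature_c: float


class SensorBuffer:
    """Keeps every reading on the sensor until the receiver acknowledges it."""

    def __init__(self):
        self._pending = deque()
        self._next_seq = 0

    def record(self, timestamp, temperature_c):
        reading = Reading(self._next_seq, timestamp, temperature_c)
        self._pending.append(reading)
        self._next_seq += 1
        return reading

    def flush(self, send):
        """Send pending readings in order; return how many are still buffered.

        `send` is a hypothetical transport callable that returns True only
        when the receiver has confirmed that the reading was stored.
        """
        while self._pending:
            if not send(self._pending[0]):
                break                    # no confirmation: keep data, retry later
            self._pending.popleft()      # drop only after confirmation
        return len(self._pending)
```

A reading leaves the buffer only after a positive confirmation, so a failed or interrupted transmission never discards data; the sequence number is one possible way for the receiving side to detect missing readings.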

When monitoring data in a Cold Chain environment the completeness of the data is THE main concern and cause for problems. Therefore, the Cold Chain database should include mechanisms to mitigate the following risks:

Intact Data – Although the risk of accidental or intentional modification is minimal, the integrity of data in a measurement chain can only be achieved by encrypting the data all the way from the measuring wireless sensor through the communication bridge (LPWAN network or email) to the cloud. Once the data has arrived in the software, it is important that no raw data can be deleted or modified. No user should be able to change the raw data; however, certain types of additional information can be added. For example, to add an interpretation of the data, comments or acknowledgements about the raw data can be added to the system. Furthermore, to create selective views of the raw data, reports can be created and exported.
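The separation between unchangeable raw data and additive comments could look roughly like the following sketch. The `RawReading` and `ReadingStore` names are hypothetical, and an in-memory dictionary stands in for whatever database a real system would use; encryption in transit is not shown.

```python
from dataclasses import dataclass


@dataclass(frozen=True)          # frozen: attributes cannot be reassigned
class RawReading:
    sensor: str
    timestamp: str
    temperature_c: float


class ReadingStore:
    """Raw readings are write-once; comments can only be appended."""

    def __init__(self):
        self._raw = {}
        self._comments = {}

    def add_reading(self, reading):
        key = (reading.sensor, reading.timestamp)
        if key in self._raw:
            raise ValueError("raw data cannot be overwritten")
        self._raw[key] = reading
        self._comments[key] = []

    def add_comment(self, sensor, timestamp, user, text):
        # Interpretation is stored next to the raw value, never in place of it.
        self._comments[(sensor, timestamp)].append((user, text))

    def get(self, sensor, timestamp):
        key = (sensor, timestamp)
        return self._raw[key], tuple(self._comments[key])
```

The store deliberately offers no update or delete method for raw readings, which mirrors the rule that interpretation is layered on top of the measurement rather than replacing it.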

Maintaining Electronic Data in its Original Context – Keeping the data in one single source on a central cloud infrastructure ensures that it is kept in its original recorded context and the risk of misinterpretation is therefore eliminated. Warnings, alarms, and reports should always refer to the unique sensor name, event number, and time stamp.

There are many rules to follow when it comes to compliance in user management. Every user with access to the solution must be identified by a unique username and password and must have a clearly defined role and rights. Additionally, every action taken by the user in the system must be identified and tracked. When conducting critical operations, such as the acknowledgement of an alarm, the user must confirm the action by entering the password a second time. To avoid unauthorized access, it is important to implement a time-out mechanism that ends the session if the user takes no action for a longer period.
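A minimal sketch of the two mechanisms named here, password confirmation for critical operations and an inactivity time-out, is shown below. The `Session` class, the 15-minute limit and the PBKDF2 parameters are illustrative assumptions, not requirements taken from the regulation.

```python
import hashlib
import hmac
import time

SESSION_TIMEOUT_S = 15 * 60      # assumed inactivity limit


def hash_password(password, salt):
    # PBKDF2 from the standard library; the iteration count is illustrative.
    return hashlib.pbkdf2_hmac("sha256", password.encode(), salt, 100_000)


class Session:
    def __init__(self, username, salt, password_hash, clock=time.monotonic):
        self.username = username
        self._salt = salt
        self._password_hash = password_hash
        self._clock = clock
        self._last_activity = clock()

    def is_active(self):
        return self._clock() - self._last_activity < SESSION_TIMEOUT_S

    def touch(self):
        """Register user activity, or refuse if the session has timed out."""
        if not self.is_active():
            raise PermissionError("session timed out; log in again")
        self._last_activity = self._clock()

    def acknowledge_alarm(self, alarm_id, password, reason):
        """Critical operation: requires the password a second time."""
        self.touch()
        candidate = hash_password(password, self._salt)
        if not hmac.compare_digest(candidate, self._password_hash):
            raise PermissionError("password confirmation failed")
        return {"user": self.username, "action": "acknowledge_alarm",
                "alarm_id": alarm_id, "reason": reason}
```

The returned dictionary is the kind of record that would then be written to the audit trail; the `clock` parameter exists only so the time-out can be exercised without waiting.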

The result of the tracking functionalities mentioned above is a complete audit trail aligned with compliance. It answers the questions: who has done what, and why? Technically, the audit trail keeps track of every single automated event the system generates and every single manual task a user performs. So, regardless of the perspective from which one looks into the system, a full audit trail could be:

A temperature monitoring system typically executes the following different automated mechanisms and workflows:

In addition to automated events, the system must keep track of every single manual task a user performs including the time stamps of each task. The following manual events could be tracked:
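Independently of which events are tracked, automated and manual events can share one append-only record structure that captures who, what, when and why. The sketch below is one possible shape; the field names and the `"system"` actor convention are assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditEntry:
    timestamp: datetime          # when (UTC)
    actor: str                   # who: a user name, or "system" for automated events
    action: str                  # what
    target: str                  # on which sensor, report, user account, ...
    reason: Optional[str] = None # why (typically given for manual actions)


class AuditTrail:
    """Append-only: entries can be added and read, never edited or removed."""

    def __init__(self):
        self._entries = []

    def log(self, actor, action, target, reason=None):
        entry = AuditEntry(datetime.now(timezone.utc), actor, action, target, reason)
        self._entries.append(entry)
        return entry

    def entries(self):
        return tuple(self._entries)   # read-only copy for reports and audits
```

For example, `trail.log("system", "alarm_raised", "sensor-A")` followed by `trail.log("qa_user", "alarm_acknowledged", "sensor-A", "door open during loading")` would yield two time-stamped entries for the same (hypothetical) sensor.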

In a Cold Chain database, the question of an audit trail is much more complex than for a solution monitoring rooms and equipment. Why? Because there are many more participants included:

The Cold Chain database must keep an audit trail aligned with compliance that records who has done what, and why. Even more important is limiting user rights to prevent any intended or unintended changes that are not absolutely necessary to perform the specific process in the given situation. A full Cold Chain audit trail could be:

The International Air Transport Association (IATA) has recognized that the pharmaceutical industry tries to avoid air transportation whenever possible. “A majority of all temperature excursions that occur happen while the package is in the hands of airlines, airports and their contractors.” More than 15 years ago, IATA initiated the Time and Temperature Working Group (TTWG), which developed the Temperature Control Regulations (TCR), a guide designed to enable stakeholders involved in the transport and handling of temperature-sensitive products to meet the requirements of the pharmaceutical industry. This guide raised awareness of these issues within the entire logistics industry. The group also introduced the “Time & Temperature Sensitive” label, which remains a well-known standard today.

However, IATA has recognized that a label was not enough and created the Center of Excellence for Independent Validators in Pharmaceutical Logistics (CEIV Pharma). The CEIV Pharma certification program aims to support the air cargo supply chain in achieving pharmaceutical handling excellence and increase safety, security, compliance and efficiency by the creation of this globally consistent and recognized pharmaceutical product handling certification. Airports, trade lanes, service providers, and entire networks can now be certified by training personnel, improving equipment, and introducing checklists and processes.

GMP and GDP clearly require the qualification of all equipment used to produce, store, and transport temperature-sensitive pharmaceuticals. This includes data loggers. As a pharmaceutical company using a data logger and/or a cold chain database, you need to prove that it fulfills its intended purpose.

Qualification is the documented proof by the Marketing Authorization Holder (MAH) that the Cold Chain monitoring solution fulfills its intended purpose: documenting a release decision.

The company using the Cold Chain monitoring solution must perform the qualification itself, for its own specific process. The qualification documentation of the Cold Chain monitoring company is a popular target during FDA audits. Qualification of a Cold Chain monitoring solution in a specific situation can be kept simple if the supplier qualifies all elements of the solution (i.e. hardware, software, services) beforehand and provides thorough documentation to support it. Although qualification is the better term, this activity is often called “component validation.”

Each component used in a Cold Chain monitoring solution must be validated/qualified by the supplier. They must provide documented proof that each component fulfills its intended purpose.

Comprehensive qualification support packages provided by data logger vendors include:

The supplier typically provides guidance during the qualification and is open for audits. During audits, the detailed V-Model documents can be inspected.

GMP and GDP standards require that pharmaceutical products be stored and transported under the temperature conditions stated on the drug label. Every excursion from these temperature conditions must be documented. The monitoring system should support the user by creating automated excursion reports, to which the user can still add certain information. The following procedure gives an example of the questions a Quality Manager should ask once a temperature excursion has occurred.

ALARM GOES OFF

A temperature excursion triggers an alarm. The alarm can be seen on the sensor itself or the dashboard display and can be sent out via email or SMS text containing an excursion report with the following information:

Where did the alarm go off? Which facility, container or sensor had an excursion?

When did the temperature excursion occur and when were the drug label conditions re-established?

How long has the product been exposed to temperatures outside the drug label conditions?

What was the highest/lowest temperature measured?

Risks? Is it likely that the core temperature of the product has been affected, thus damaging the product?

Severity? Is there sufficient stability budget left to justify a release of the product or is a product recall necessary?

Corrective actions needed? What is the cause of the temperature excursion and does it have to be corrected? Do people need to be informed about the findings?

Preventive Actions needed? In case of high-risk and/or repetitive errors, which preventive actions can be performed in order to avoid a repetition of the event? Are changes implemented?
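The "when", "how long" and "highest/lowest" questions above can be answered directly from the recorded data. The following sketch shows one way an automated excursion summary might be computed; the function name, the dictionary keys and the rule of counting each interval that starts with an out-of-range reading as exposure time are assumptions.

```python
from datetime import datetime, timedelta


def summarize_excursion(readings, low_c, high_c):
    """readings: list of (datetime, temperature_c) tuples, sorted by time."""

    def out_of_range(temp):
        return temp < low_c or temp > high_c

    out = [(t, temp) for t, temp in readings if out_of_range(temp)]
    if not out:
        return None                      # no excursion in this data set

    # Exposure: sum of all sampling intervals that start out of range.
    exposure = timedelta(0)
    for (t0, temp0), (t1, _) in zip(readings, readings[1:]):
        if out_of_range(temp0):
            exposure += t1 - t0

    # Label conditions count as re-established at the first reading
    # after the last out-of-range value (None if that has not happened yet).
    last_out = out[-1][0]
    restored = next((t for t, _ in readings if t > last_out), None)

    return {
        "start": out[0][0],
        "restored": restored,
        "exposure": exposure,
        "min_c": min(temp for _, temp in out),
        "max_c": max(temp for _, temp in out),
    }
```

The remaining questions (risk, severity, corrective and preventive actions) require a quality decision and stability data, so they stay with the Quality Manager rather than the software.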

A dashboard gives a brief overview of the current status of each sensor. The sensors can be grouped in a meaningful way or placed on top of a floor plan to illustrate their physical location. The dashboard should show the currently measured value and the alarm status, and give further meaningful information on the technical status of the sensor. The benefits of a dashboard are:

Besides a clear alarming mechanism, it is vital to have periodic reporting on all sensors in a system. Reports can serve different purposes and therefore contain different content. If the report serves as an archive of data, it should comply with the ISO standards for long-term archiving. If the report is sent to customers, it might be beneficial to combine various sensors, providing a comprehensive overview of the customer's project. Examples of regular reports may include:

Archiving is not clearly defined in GxP regulations and is left open to interpretation. Many people have the unrealistic idea that once data is archived, it should be available forever in the same way it was generated. Data archiving is the process of “moving data that is no longer actively used to a separate storage device for long-term retention. Archive data consists of older data that remains important to the organization or must be retained for future reference or regulatory compliance reasons.” As a result, “archive data” has a different form than “process data.”

Process data is “fresh data” which is used to execute business decisions (e.g. data of a product, mean kinetic temperature calculation of a stability study). The service provider must ensure that, for two years, process data is available electronically for visualizations (e.g. zoom, overlay); statistics (e.g. calculate MKT); reports (e.g. release decision); and exports of the data (e.g. to a higher batch management system). Furthermore, it must be possible to add comments related to the data in the system.
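For the MKT statistic mentioned above, a minimal sketch of the usual calculation is given here. It assumes equally spaced readings and the conventional default activation energy of 83.14472 kJ/mol, which gives ΔH/R = 10,000 K; the function name is hypothetical, and a product-specific activation energy may be more appropriate in practice.

```python
import math

DELTA_H_OVER_R = 10_000.0   # K; conventional default (83.14472 kJ/mol / R)


def mean_kinetic_temperature(temps_c):
    """Mean kinetic temperature in degrees C for equally spaced readings in degrees C."""
    if not temps_c:
        raise ValueError("at least one reading is required")
    kelvin = [t + 273.15 for t in temps_c]
    # Average of the Arrhenius terms exp(-dH / (R * T)) over all readings.
    mean_exp = sum(math.exp(-DELTA_H_OVER_R / t) for t in kelvin) / len(kelvin)
    mkt_kelvin = DELTA_H_OVER_R / -math.log(mean_exp)
    return mkt_kelvin - 273.15
```

Because the exponential weighting emphasizes warmer readings, the MKT of a varying temperature profile is always at least as high as its arithmetic mean; for a constant profile the two coincide.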

After the first two years, the data is typically not needed in business processes anymore and its location and form will be changed to archive data. The service provider must ensure that archive data is available for at least 10 years and fulfils the following requirements:

Copyright © ELPRO-BUCHS AG 2024. All Rights Reserved.